Can ChatGPT diagnose you? New research suggests promise but reveals knowledge gaps and hallucination issues

The use of generative artificial intelligence like ChatGPT for medical diagnosis has been on the rise as people seek quick answers to their health concerns. A recent study published in the journal iScience tested the accuracy of the medical information ChatGPT provides and found strong performance on some tasks but clear weaknesses on others.

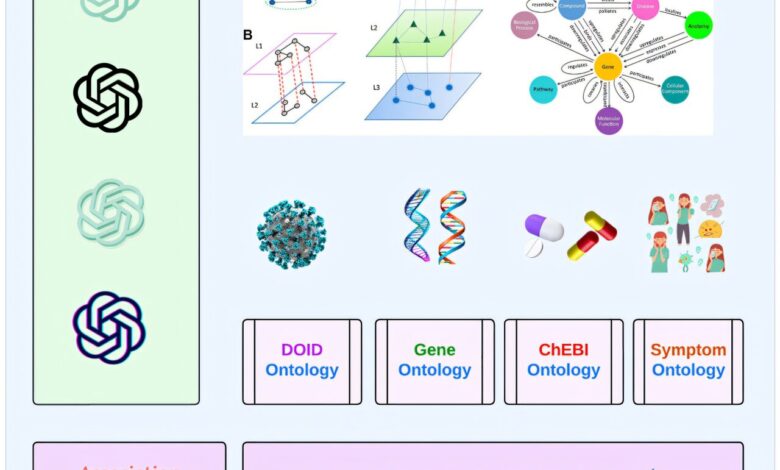

Led by research fellow Ahmed Abdeen Hamed from Binghamton University, the study tested ChatGPT’s ability to identify disease terms, drug names, genetic information, and symptoms. For the first three categories, the AI’s accuracy ranged from 88% to 98%, a notable finding given initial skepticism about its capabilities.

Symptom identification was a different story: ChatGPT’s accuracy there fell to between 49% and 61%. One likely reason for the gap is a mismatch in register, since symptoms are often described in informal, everyday language while medical literature uses formal terminology. Hamed noted that ChatGPT’s own tendency toward more casual language could be a factor in the lower accuracy rate.

One concerning result from the study was the AI’s tendency to “hallucinate” genetic information when asked for specific accession numbers from the National Institutes of Health’s GenBank database. Hamed highlighted this as a significant flaw that needs to be addressed to improve the overall accuracy of ChatGPT in medical diagnostics.
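As a rough illustration of why such output needs checking, the sketch below screens candidate strings against the nucleotide accession formats that NCBI documents (one letter plus five digits, or two letters plus six or eight digits, with an optional version suffix). It is not the method used in the study, and the function name is hypothetical. A string that passes this check only looks like an accession number; confirming that the record exists would still require a lookup in GenBank itself.

```python
import re

# Nucleotide accession formats as documented by NCBI:
# 1 letter + 5 digits, 2 letters + 6 digits, or 2 letters + 8 digits,
# optionally followed by a ".version" suffix such as ".1".
_NUCLEOTIDE_ACCESSION = re.compile(
    r"(?:[A-Z]\d{5}|[A-Z]{2}\d{6}|[A-Z]{2}\d{8})(?:\.\d+)?"
)


def looks_like_nucleotide_accession(text: str) -> bool:
    """Return True if text matches a known nucleotide accession format.

    Hypothetical helper for illustration: this is a format check only and
    says nothing about whether the record actually exists in GenBank.
    """
    candidate = text.strip().upper()
    return _NUCLEOTIDE_ACCESSION.fullmatch(candidate) is not None


def malformed_accessions(candidates):
    """Return the candidates that fail the format check, in input order."""
    return [c for c in candidates if not looks_like_nucleotide_accession(c)]
```

A format screen like this catches only the crudest fabrications. A well-formed but nonexistent identifier would pass, which is why verification against the database remains necessary.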

Despite these challenges, Hamed sees potential in improving ChatGPT by integrating biomedical ontologies to enhance accuracy and eliminate hallucinations. His goal is to shed light on the limitations of large language models (LLMs) like ChatGPT so that data scientists can make necessary adjustments to enhance their performance.
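The general idea of checking generated terms against a curated vocabulary can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not the study's pipeline: the function name is hypothetical, the reference vocabulary is a toy set standing in for a real biomedical ontology, and matching is a simple case-insensitive exact comparison.

```python
def verification_rate(generated_terms, reference_vocabulary):
    """Compare generated terms against a reference vocabulary.

    Returns (rate, unverified), where rate is the fraction of generated
    terms found in the vocabulary and unverified lists the terms that
    were not found. Matching is case-insensitive and exact, which is an
    assumption made for this sketch; a real ontology lookup would also
    handle synonyms and spelling variants.
    """
    reference = {term.strip().lower() for term in reference_vocabulary}
    terms = [term.strip().lower() for term in generated_terms]
    if not terms:
        return 0.0, []
    unverified = [term for term in terms if term not in reference]
    rate = (len(terms) - len(unverified)) / len(terms)
    return rate, unverified


# Toy data for illustration only; "glorbitis" is a made-up term.
toy_vocabulary = {"hypertension", "asthma", "migraine"}
generated = ["Hypertension", "asthma", "glorbitis", "Migraine"]
rate, unverified = verification_rate(generated, toy_vocabulary)
```

With this toy input, three of the four terms are found in the vocabulary, so the made-up term is the only one flagged as unverified.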

Hamed’s research underscores the importance of verifying the accuracy of AI-generated information, especially in critical areas like healthcare. If developers address the knowledge gaps and hallucinations the study identified, AI tools like ChatGPT could provide more reliable and trustworthy medical insights for users.

For more information on this study, you can refer to the article “From knowledge generation to knowledge verification: examining the biomedical generative capabilities of ChatGPT” published in iScience in 2025. DOI: 10.1016/j.isci.2025.112492.

This research was conducted by Binghamton University in collaboration with AGH University of Krakow, Poland; Howard University; and the University of Vermont.