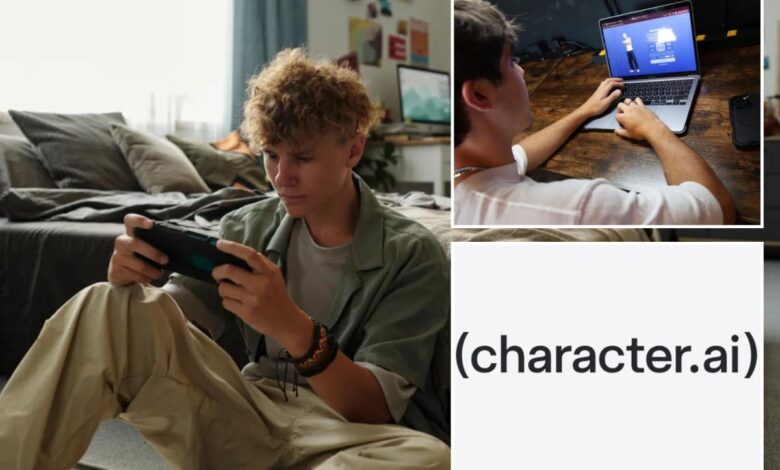

Character.AI bans chatbots for teens after lawsuits blame app for deaths, suicide attempts

Character.AI, a company known for its AI bots that impersonate characters like Harry Potter, has announced that it will ban teenagers from using the chat function on its app. The decision follows lawsuits blaming explicit chats on the app for the deaths and suicide attempts of children.

Users under the age of 18 will no longer be able to engage in open-ended chats with the AI bots, which can sometimes turn romantic. Approximately 10% of the app’s monthly users are teenagers; they will now be limited to two hours of chat per day until the function is banned for them entirely by November 25.

Character.AI stated that it has been working on creating a safer experience for younger users over the past year. However, as the world of AI evolves, the company said, it needs to adapt its approach to better support teenagers.

The company introduced some safety features for teens in October 2024, but faced backlash following a lawsuit filed by the family of a 14-year-old who died by suicide after forming inappropriate relationships with the app’s bots. Despite implementing parental controls, time restrictions, and efforts to eliminate romantic content for teens, Character.AI continued to be accused of posing a threat to young users.

In response to these concerns, Character.AI has decided to remove chatbots that impersonate iconic characters such as Prince Ben from Disney’s “Descendants” and Rey from “Star Wars.” Additionally, it has announced new safety measures, including an age-verification system and the establishment of an independent non-profit called the AI Safety Lab to develop safety features for AI advancements.

The company, which generates revenue through advertising and a monthly subscription model, is expected to end the year with a $50 million run rate. Despite facing scrutiny from regulators and legislators, Character.AI is committed to ensuring the safety of young users engaging with AI technology.